There are so many issues on which people can be or become confused about whether their choice or stance is political, economic, or moral. The problem with the third in this sequence is that it makes the choice or stance on the first two much clearer than may be desirable or convenient.

Further, confusion about the rights of others that allows for a seeming choice where none exists creates space for degeneracy – bigotry, victimization, persecution, false equivalence – which turn into their own rationales for unfortunately familiar behavior. It’s sadly too easy to see why attacks on vulnerable people are wrong, so the convolution helps cover otherwise indefensible actions and positions, from anti-trans attacks all the way up to capitalism itself.

The fear that capitalism would be so much less forceful and thus successful with limits on its rapacious character is so embedded as to be dogma. It must always be fully unleashed to exist at all. We equate force with success, which inures us to all the resulting destruction. Collateral damage hardly makes a dent as a concept anymore, and it remains unclear whether that stems from the inability to see outside of one’s own interests or the casual acceptance of casualties, whatever their nature. Either way, it’s quite the evolution. We have yet to grapple with the actual consequences of zero-sum, much less the reality that ‘externalities’ get less and less external the further we go with this.

The concept of ‘easy solutions’ feeds misdirection from guardians of the status quo, no matter their futurist garb. Positive-sum deflates fascism and environmental catastrophe with great collaboration and distribution, and its tools sit idle, though ready. One of the new tricks taught by every old dog is that the opposite of love is not hate, but indifference.

One need not be a philosopher or a socialist to recognize the moral character of our actions. No special training or knowledge is required, only courage.

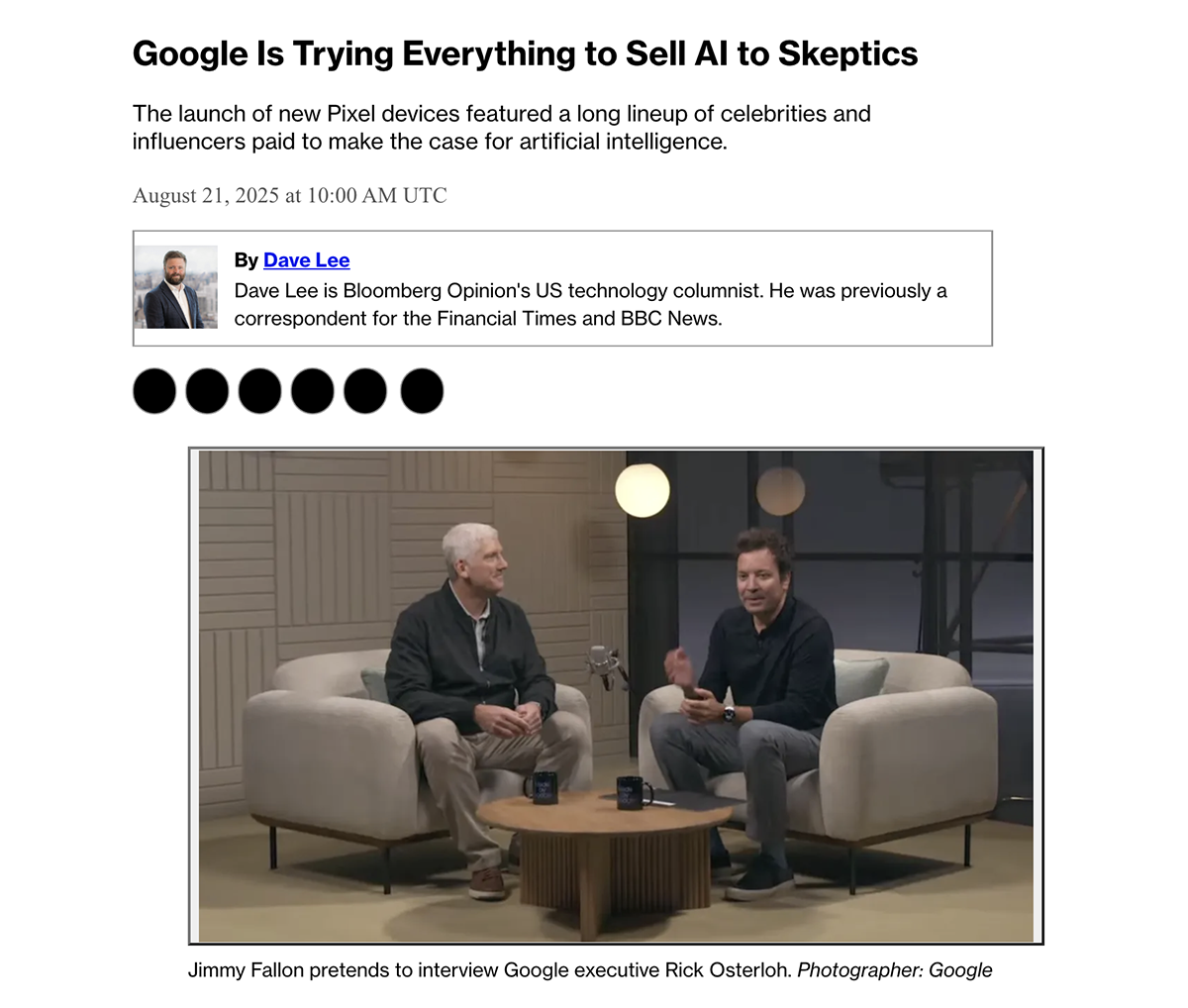

Image: Symbol for the chemical element beryllium. Elements are amoral, but Be is a divalent element that occurs naturally only in combination with other elements to form minerals. Just sayin’.